In the age of digital analytics, which constantly spews out enough numbers to make your head spin, it is easy to fall into the trap of thinking you “really” understand your users. We have standard page view data, the exact spots where people click, and more abstract collection methods such as heat maps, mouse-movement tracking, and session recordings. With all the measurement tools in the marketer’s toolbox these days, you can feel so well equipped with numbers that talking directly to current or prospective customers seems unnecessary or unreliable (numbers do not lie, but people do). To be clear, I consider myself an analytics junkie, so I am not downplaying the importance of these tools.

Where people seem to have a disconnect is in understanding the gap between the numbers and why people take a certain action. Oftentimes it is tough to understand user motivations without talking to them. Even when a test variation performs well, there can be a lot of assumptions or misunderstandings about why it did well.

It was not until I started incorporating voice of customer (VOC) data into testing analysis and strategy that a clearer picture of the thought process behind users’ behavior arose. From there, we can actually start to build a holistic strategy to optimize our experiences for users. This VOC data was often the genesis of test ideas or a catalyst for follow-up experiments.

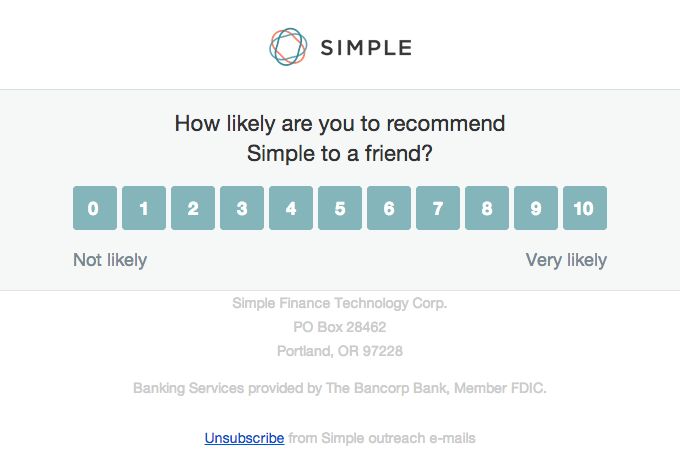

There is a wealth of options when it comes to customer feedback methods. You can collect NPS (Net Promoter Score) data, which can help you understand customer sentiment over time but struggles to give specific information on optimization opportunities. Some services allow user feedback directly on specific pages, which can yield actionable information but can also be filled with rants or make it tough to find consensus on issues. Below are different VOC collection methods, with my thoughts, based on experience, on which customer feedback methods work well to augment your CRO strategy.

1) On page/website user feedback tools

Engaging users while they are in the moment can be a great way to receive candid feedback, or even ideas, directly from your target audience. Gathering page scores, NPS, short-answer responses, or even multi-step feedback surveys is easy now with the many accessible tools on the market.

What I have seen work well:

- Collecting user feedback at struggle points identified by analytics: this can be a great way to understand issues with a page that are not obvious when you stare at the site every day. On-page feedback can sometimes highlight issues people are having with a particular process, which can impact conversions later in the funnel. For example, an unclear product page can impact not only add-to-cart rates, but also customers’ propensity to complete a purchase after adding to the cart. If you see a page that is doing poorly in the user journey, carefully ask your users, and you might be surprised by the responses.

- NPS scoring (or similar): in particular, this can work well when looking at the long-term impact of a change. For example, one e-commerce company I worked with was interested in the impact of different free shipping transit times. While we were concerned with the immediate conversion impact of offering slower free shipping, it was just as important to monitor NPS and survey feedback from customers in the different test populations to see whether there might be long-term reputation and repeat purchase impacts. It is important to note that some survey/feedback companies have developed their own alternatives to NPS, as many feel NPS is naturally biased toward overstating negative sentiment.

- Surveys centered around understanding what appeals to and excites users: many companies, in my opinion, do a terrible job of communicating a good value proposition. Often there is a disconnect between what a company thinks users value and what they really do value. These responses can help you craft that value proposition, and also give you guidance on what you need to communicate clearly to users.
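If you want to compare NPS across test populations yourself, the arithmetic is simple: the share of promoters (scores of 9 or 10) minus the share of detractors (scores of 0 through 6), expressed on a scale from -100 to 100. Below is a minimal sketch of that calculation. The variation names and the responses are made up for illustration, and your survey tool's export format will almost certainly differ.

```python
from collections import defaultdict


def nps(scores):
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one score")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


def nps_by_variation(responses):
    """Group (variation, score) pairs and compute NPS per test variation."""
    grouped = defaultdict(list)
    for variation, score in responses:
        grouped[variation].append(score)
    return {variation: nps(scores) for variation, scores in grouped.items()}


# Hypothetical survey responses: (test variation, 0-10 "likely to recommend" answer)
responses = [
    ("fast_shipping", 10), ("fast_shipping", 9),
    ("fast_shipping", 7), ("fast_shipping", 4),
    ("slow_free_shipping", 9), ("slow_free_shipping", 6),
    ("slow_free_shipping", 5), ("slow_free_shipping", 8),
]

print(nps_by_variation(responses))
```

With only a handful of responses per variation, a gap like this is noise, so treat the per-variation number as something to watch over time rather than as a test winner on its own.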

Some of the many tools to easily get you started:

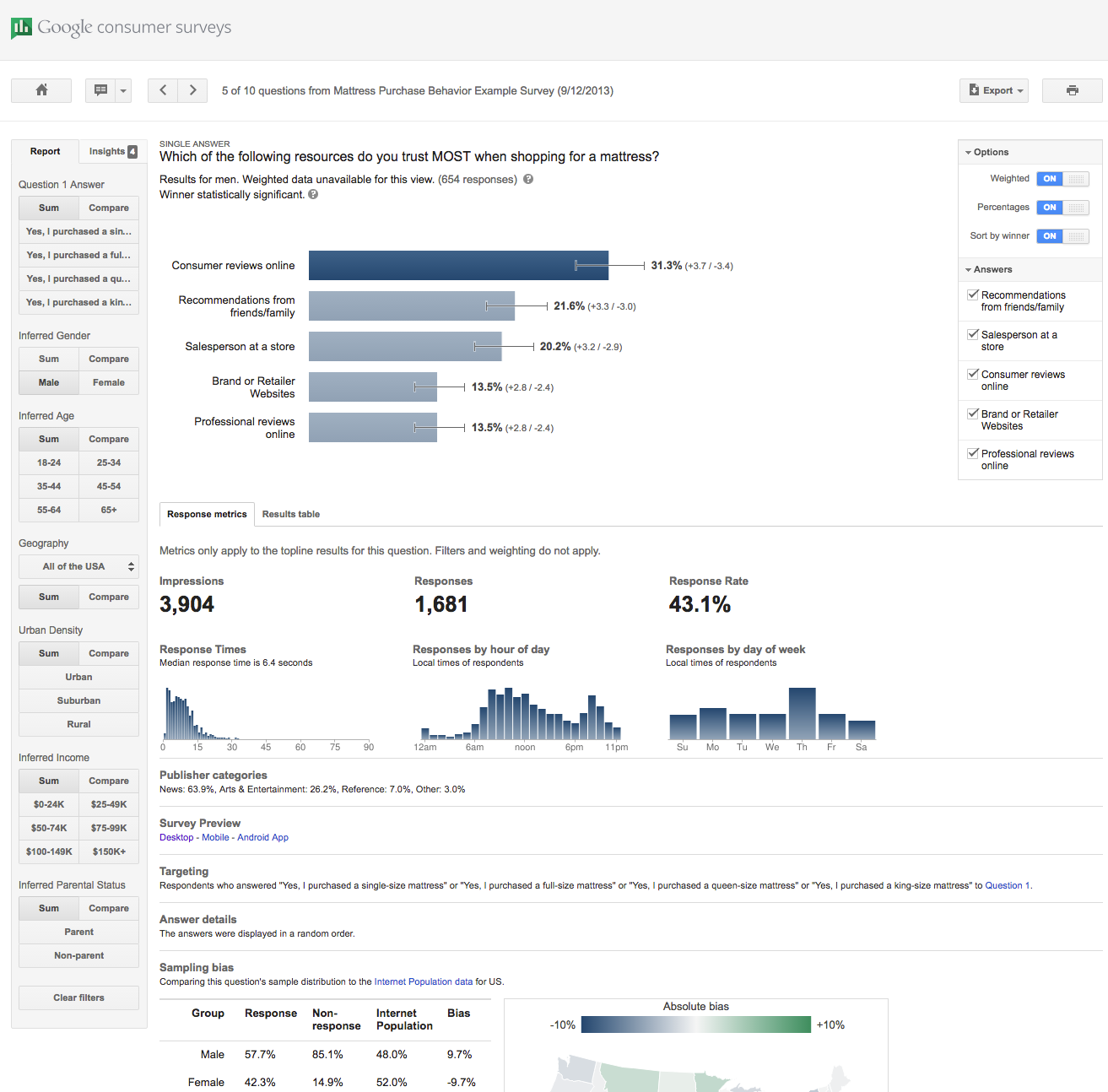

I would like to give a specific shout-out to Google Consumer Surveys (GCS), if you have not tried the product. Not only does it allow survey collection on your own site, but you can also syndicate your survey to the many websites that run GCS surveys as a way to monetize their traffic. Additionally, it has a healthy mobile presence through its Opinion Survey panel apps. While the product UI can make you want to pull out your hair, one of its best features is that it marries respondent data with DoubleClick demographic information and offers many reporting options (including intent grouping of short answers). There is a wealth of information you can glean from this service, and it is shockingly affordable.

Google Consumer Surveys reporting

A slight rant about getting greedy with the number of survey questions: time and time again I have seen companies go overboard with the questions and requirements they put on users, who are providing feedback…for free. I am sure we have all experienced this: you are feeling a bit generous with your time and think, sure, I’ll do a survey, and then you are hit with a 25-question screen in micro-font. While there is a science to user feedback, guard very carefully against asking too much of users. Not only will it hurt your response rate, it also biases the responses toward the particular type of individual who will tolerate that amount of effort.

2) Guided Panels:

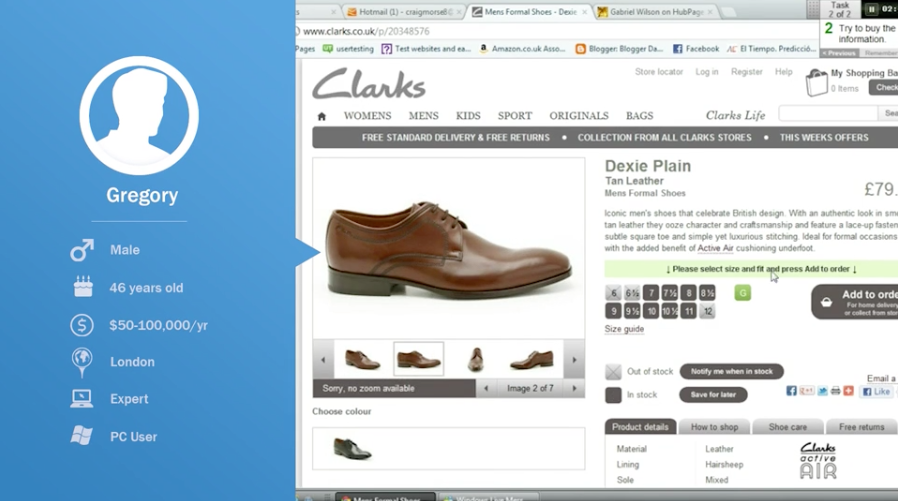

Guided panels can mean different things to different people. Panel members might be on site in a specific room, working remotely from a set of instructions while being monitored, or even people picked off the street; needless to say, you have options. While not the cheapest option, guided panels can be a great way to collect very specific feedback from users in either a structured or an unstructured environment. This data can also be coupled with eye tracking or a host of other physical measurements, and you can ask deeper questions about emotions, concerns, etc. regarding their interaction with your web experiences. Personally, I have seen some great optimization insights come out of these conversations that have led to home-run tests. With these panels, you can gain insight not only into which aspects are frustrating, but also into what people expect from their visit and are not getting. In one panel conducted for an e-commerce company, we were able to gather solid feedback on catalog filtering and navigation prototypes we were considering testing, before exposing them to the entire site population.

A couple of points I would emphasize based on past experience:

- Be careful of small panel samples. I understand that these can become expensive to run, but I have seen firsthand the issues that come up if you are surveying just a few panelists. The main problem is chasing ghost “issues” that a panelist or two raised but that do not really impact the larger site audience.

- Speak with an expert on structuring the panel and questions. There is an intersection of art and science when it comes to paneling, and guided panels can quickly go off the rails or produce misrepresentative data. It is worth the investment to speak with an experienced paneling company or consultant about best practices for question construction and wording and for panelist selection, so that the results are both reliable and representative.
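To put a rough number on the small-sample warning above, here is a minimal sketch that computes a Wilson score interval for the share of users who hit an issue, given how many panelists reported it. The panel sizes are made up, and the interval assumes panelists are a random sample of your audience, which guided panels often are not, so read it as a best case.

```python
import math


def wilson_interval(hits, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95% confidence)."""
    if n <= 0 or not 0 <= hits <= n:
        raise ValueError("need n > 0 and 0 <= hits <= n")
    p = hits / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical panels: same 40% report rate, very different panel sizes
for hits, n in [(2, 5), (40, 100)]:
    low, high = wilson_interval(hits, n)
    print(f"{hits} of {n} panelists: plausible share of users {low:.0%} to {high:.0%}")
```

Two of five panelists complaining is compatible with the issue touching anywhere from roughly an eighth to three quarters of your audience, which is exactly how ghost issues get chased. The same 40% from a panel of 100 gives a much tighter range.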

3) Internal Company Resources:

Embarrassingly enough, it took me a while in my CRO career to fully realize the benefit of engaging the people within the company who interact with customers most frequently, such as Customer Service and Sales teams. Establishing feedback loops with these departments can reveal a treasure trove of testing ideas. These individuals can hold key information about what is resonating with customers or confusing them, which is often absent from traditional analytics. At a past retail company I worked with, we used an on-page feedback tool (Qualaroo, in this case) restricted to internal customer service access, so agents could submit ideas or issues directly on the page.

We could then pull a report from Qualaroo of all the submitted issues and ideas and sort it by a wealth of attributes (including the page each was submitted on). We would typically prioritize based on how easy the issue was to address, the number of users affected, the impact on the bottom line, and the number of times it was reported.
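One way to make that prioritization repeatable is a simple weighted score over the four factors. The sketch below is only an illustration of the idea, not the process we actually ran: the 1-to-5 scales, the weights, the cap on report counts, and the sample submissions are all hypothetical, and nothing here reflects Qualaroo's actual export format.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    title: str
    ease: int            # 1 (hard to address) to 5 (easy to address)
    users_affected: int  # 1 (few users) to 5 (most users)
    revenue_impact: int  # 1 (low) to 5 (high)
    times_reported: int  # raw count of reports from agents


# Hypothetical weights; tune them to what your team actually values.
WEIGHTS = {"ease": 1.0, "users_affected": 2.0, "revenue_impact": 3.0, "times_reported": 1.0}
REPORT_CAP = 5  # cap report counts so one loud reporter cannot dominate the score


def priority(item):
    """Weighted sum of the four prioritization factors."""
    reports = min(item.times_reported, REPORT_CAP)
    return (
        WEIGHTS["ease"] * item.ease
        + WEIGHTS["users_affected"] * item.users_affected
        + WEIGHTS["revenue_impact"] * item.revenue_impact
        + WEIGHTS["times_reported"] * reports
    )


# Made-up submissions for illustration
submissions = [
    Submission("Typo in footer", ease=5, users_affected=1, revenue_impact=1, times_reported=1),
    Submission("Promo code field rejects valid codes", ease=4, users_affected=3, revenue_impact=5, times_reported=7),
    Submission("Size chart link hard to find", ease=5, users_affected=4, revenue_impact=3, times_reported=3),
]

ranked = sorted(submissions, key=priority, reverse=True)
for item in ranked:
    print(f"{priority(item):5.1f}  {item.title}")
```

Capping the report count is a deliberate choice here: it keeps frequency as a signal without letting repeated submissions of the same complaint outrank issues with a bigger revenue impact.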

A couple of things to be careful of here are responding to every little issue (remember, you have limited testing time and resources) and letting the loudest employee or customer dictate what to focus on.

The keys to success here are:

- Educate internal people on what testing can do (you should be doing this anyway)

- Communicate the process

- Celebrate co-workers as heroes when their idea leads to a win!

Wrap-up:

Now that I have walked you through the most valuable VOC tactics I have seen for optimization efforts, it is time for you to start talking to your customers (or prospective ones). First, you might want to poke around to see whether some of these efforts are already underway, because many companies are, let’s face it, not good at internal communication. If no VOC efforts are underway, start with the basics of collecting simple on-page comments or page feedback. Also, set up internal feedback channels with employees who interact with customers on a regular basis. Once you have a solid handle on the process, you can move into more advanced tactics such as NPS and even guided panels. Remember, do not buy a Ferrari only to drive it around a parking lot. Establish process and understanding, then go all out.
