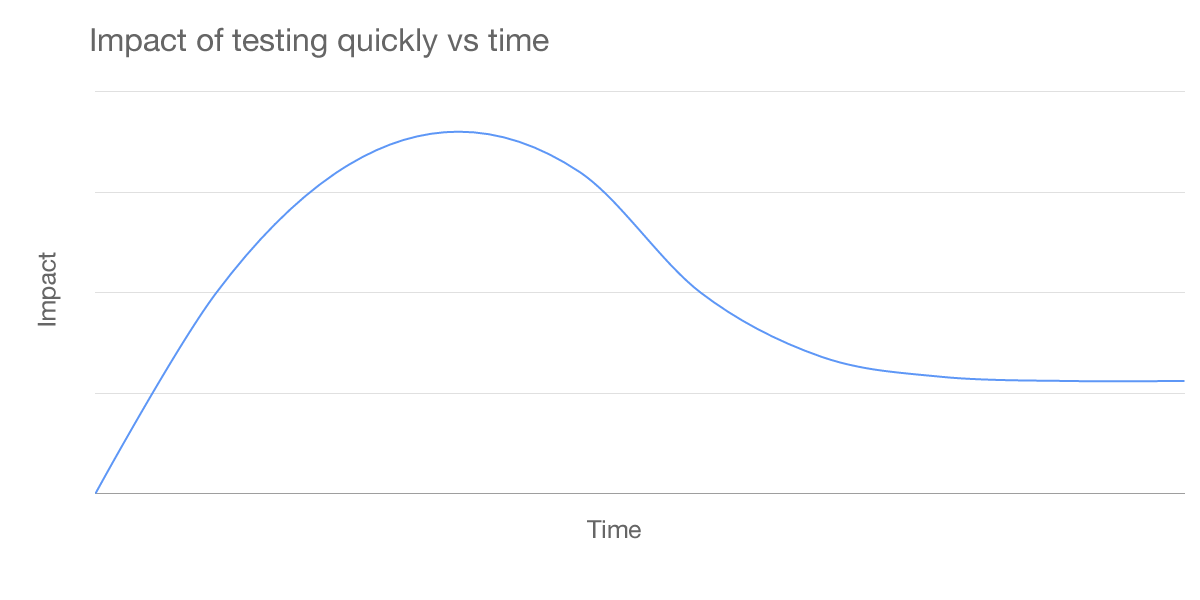

There’s no doubt that testing quickly has great advantages for an immature and agile marketing program. Being able to quickly ideate, design, launch, and analyze tests can have a huge, even exponential, impact on growth. But what happens when the simple and easy tests are done?

The more you test, the less effective quick tests become.

The impact of testing quickly diminishes over time because there are only so many quick, easy tests that actually make an impact. Eventually the easy tests have all been run, and effort must shift to the harder ones. If the focus doesn’t shift, testing becomes mired in flat results, tests with few applicable learnings, and losing tests.

What testing quickly means

To be clear, testing quickly usually translates directly to testing easy things. No matter how experienced and skilled a testing team is, quick tests must be easy ones. That’s because at least one of the three biggest time sinks in testing (ideation, development, and analysis) takes much longer for a hard test, often an order of magnitude longer than for an easy one. Where a simple landing page test can be developed in 5 hours, a checkout path test may take 50. Analysis of a new signup form test could take 10 hours, while analysis of a product pricing test may take 100 hours and several team meetings.

Testing quickly means sacrificing quality and impact for quantity, and you can’t win the testing game with quantity.

Moving too quickly results in missed insights, poor planning, missed opportunities, dysfunctional tests, and plain mistakes. The last thing a tester wants to hear after a test has run is that they missed something obvious. Testing has many moving parts, and rushing through them produces errors and wasted tests.

To understand testing quickly, we must understand the motivation

Why has speed become so important in testing in particular? Most other marketing and development initiatives don’t move quickly at all. For those projects, time to market can be on the order of months, sometimes years. Yet many people expect a test to go full cycle in weeks. Two of the primary causes of this disconnect are the focus on the launch and a lack of understanding of testing basics.

Non-testing projects in an organization are focused entirely on launch. Launch the landing page, launch the new product page, launch a new feature, launch a new marketing program; it’s the launch that’s important.

Launching is the end in those cases, but for a test, launching is just the start. In most cases, a test changes something that has already launched. And changing something that’s already there is usually pretty quick: just submit a tech ticket and the change goes out with next week’s push. Testing just doesn’t work that way.

A lack of understanding of the basic mechanics of testing is the other primary source of frustration. Tests require background research, analysis, user panels, benchmarking, designing and developing multiple versions, proper metric identification and collection, QA, and finally in-depth after-the-fact analysis to determine a winner. Most people outside of testing are unaware of much of this process and drastically underestimate how long each step takes.

We’re also not helping ourselves

The recent buzz around testing isn’t helping the case for testing slowly either. Demos from testing platforms showcasing tests launched in 5 minutes, and case studies lauding the results of quick and dirty tests, ultimately just make things harder for the people running testing programs. People outside the field see those things and expect them for every test. Unfortunately, it’s never that easy. Those tool demos don’t show the extensive metrics setup beforehand, the limitations of the visual editors, or the in-depth analysis that comes after the test has run. The case studies showcasing 100% increases in conversion from simple headline and button color changes don’t talk about the questionable math behind the claims.
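To make the questionable-math point concrete, here is a minimal Python sketch of a standard two-proportion z-test, one common way to check whether a difference in conversion rates could plausibly be noise. The visitor and conversion counts are hypothetical, not taken from any case study, and the z-test is a normal approximation that gets rough at very small counts.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates (pooled variance).

    Returns (relative lift of B over A, z statistic, two-sided p-value).
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_b / p_a - 1, z, p_value


# Hypothetical: 5 of 100 visitors convert on the control, 10 of 100 on the variant.
lift_small, z_small, p_small = two_proportion_z_test(5, 100, 10, 100)
# Same conversion rates, but with 1,000 visitors per version.
lift_large, z_large, p_large = two_proportion_z_test(50, 1000, 100, 1000)

print(f"100 per version:   lift={lift_small:.0%} z={z_small:.2f} p={p_small:.4f}")
print(f"1,000 per version: lift={lift_large:.0%} z={z_large:.2f} p={p_large:.6f}")
```

With 100 visitors per version, the 100% lift comes with a p-value of roughly 0.18, well above the conventional 0.05 threshold, so the result could easily be chance. The same lift on 1,000 visitors per version is clearly significant, which is why the sample behind a headline number matters as much as the number itself.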

It doesn’t do any good to oversimplify testing and exaggerate the results.

Simplification may help win initial buy-in for the concept of testing, but it does so at the cost of making continued efforts harder and setting the stage for disappointment.

Testing slowly is the way to go

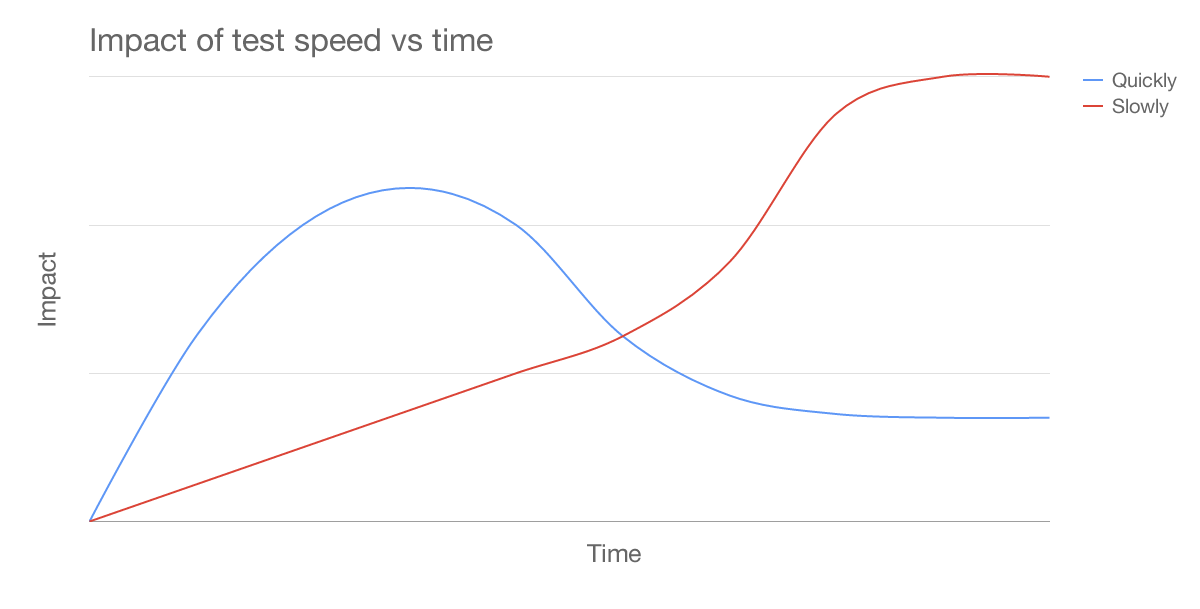

In a mature testing program, slow testing is the only way to ensure consistent, business-significant results. Testing slowly allows for deep analysis of user behavior, tests that drastically change the experience, and tests that have been thoroughly thought out and executed with purpose.

As the age of a testing program increases, so does the impact of testing slowly.

The relationship between the impact of a test and how long a business has been testing. Over time, the impact of testing slowly increases.

Traction with slow testing

The massive difference between easy and hard (quick and slow) tests is difficult to communicate to people not directly involved in testing, especially since most people’s first experience with testing is a new program’s early run of huge successes and quick wins. Those wins won’t continue, and the danger is that confidence and enthusiasm for testing wane along with them.

People who aren’t in the trenches running tests think all tests should be quick and easy.

Slowing the testing cadence can be the death knell of a testing group. When tests that used to take a week start taking months, people stop caring about them. So how do you keep interest and traction when testing inevitably slows down?

Slow testing must be methodical in its approach and execution. That doesn’t mean tests must always hit launch deadlines and be reported out by the first of every month; testing simply can’t be that predictable, and there are always delays. It does mean that engagement between the testing team and the rest of the business must be continuous and regular.

Unfortunately, it’s really hard to be methodical and continuous in testing. Some tests show massive improvements and end earlier than expected; others linger and run flat for months. One checkout path test may be developed in a week, while a similar test takes a month and is then scrapped because of pushback from another department. Given all that, how is methodical testing possible, and what does a methodical testing program look like?

Diversified lines and continuous, albeit slow, progress

A good testing strategy calls for several lines of testing that are continually moving, each focused on a department or product. For example, at any one point across all the lines, there may be ten tests in active ideation, three in development, four running, and five in analysis. Any single line, however, may have only a test or two in ideation, one running, and one being analyzed. This approach diversifies the testing portfolio and keeps progress continuous.

A diversified testing program accepts and plans for losing tests, tests that take longer than expected to develop, tests that get shut down by department pushback, and tests that take longer than expected to validate. In an undiversified testing program, any of those could halt testing completely and send the team back to the drawing board.

Diversity in the testing program isn’t limited to lines of business; it applies to the types of tests too. What this means differs from team to team, but a typical source of diversity here is test goals and test metrics. Without that diversity, changing organizational goals, seasonality, new hires, customer demands, or simply a shift in focus can mean throwing away every test in the pipeline and starting over. With it, you simply drop the irrelevant tests and keep moving forward with the others. Diversification makes testing agile in the sense that changes can be taken in stride and slow testing can continue.
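To make the diversification idea concrete, here is a minimal Python sketch of a test pipeline spread across several lines and goals. The `Test` record, the `stage_counts` and `drop` helpers, and the example tests, lines, and goals are all hypothetical; the point is only that when tests are spread out, a blocked department or a changed goal removes a slice of the pipeline rather than all of it.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Test:
    name: str
    line: str   # hypothetical: the department or product the test belongs to
    goal: str   # hypothetical: the goal or metric the test targets
    stage: str  # "ideation", "development", "running", or "analysis"


def stage_counts(tests):
    """Count how many tests sit in each pipeline stage across all lines."""
    return Counter(t.stage for t in tests)


def drop(tests, *, line=None, goal=None):
    """Drop tests blocked in one line or made irrelevant by a goal change; keep the rest."""
    return [t for t in tests if t.line != line and t.goal != goal]


# Hypothetical pipeline spread across five lines and three goals.
pipeline = [
    Test("checkout-steps", "checkout", "revenue", "development"),
    Test("pricing-tiers", "pricing", "revenue", "ideation"),
    Test("signup-form", "signup", "signups", "running"),
    Test("onboarding-email", "onboarding", "retention", "analysis"),
    Test("landing-hero", "acquisition", "signups", "ideation"),
]

# The business shifts focus away from signups: only those tests are dropped.
remaining = drop(pipeline, goal="signups")
print([t.name for t in remaining])
print(stage_counts(remaining))
```

Dropping the `signups` goal here leaves three of the five tests still moving, and blocking a single line such as `checkout` leaves four, so the program keeps progressing instead of going back to the drawing board.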

TL;DR — Test slowly and methodically.

Quick wins are for new testing programs: there are only so many, and most turn out to be bunk after deeper analysis. Testing slowly and methodically is where the truly business-transformative conversion increases and learnings are found.